Image Credit: James Duncan Davidson

The year is 2026. Nearly 100 years on from the first UK milestone, the Equal Franchise Act, women can finally vote, petition for divorce, open bank accounts and, vitally, choose what happens to their bodies… or perhaps not.

Standing on the shoulders of the waves of feminism that came before, the 21st-century woman is still battling to assert ownership of her body and image. Most alarmingly, the opposition is not just men, but their unaccountable creation, AI.

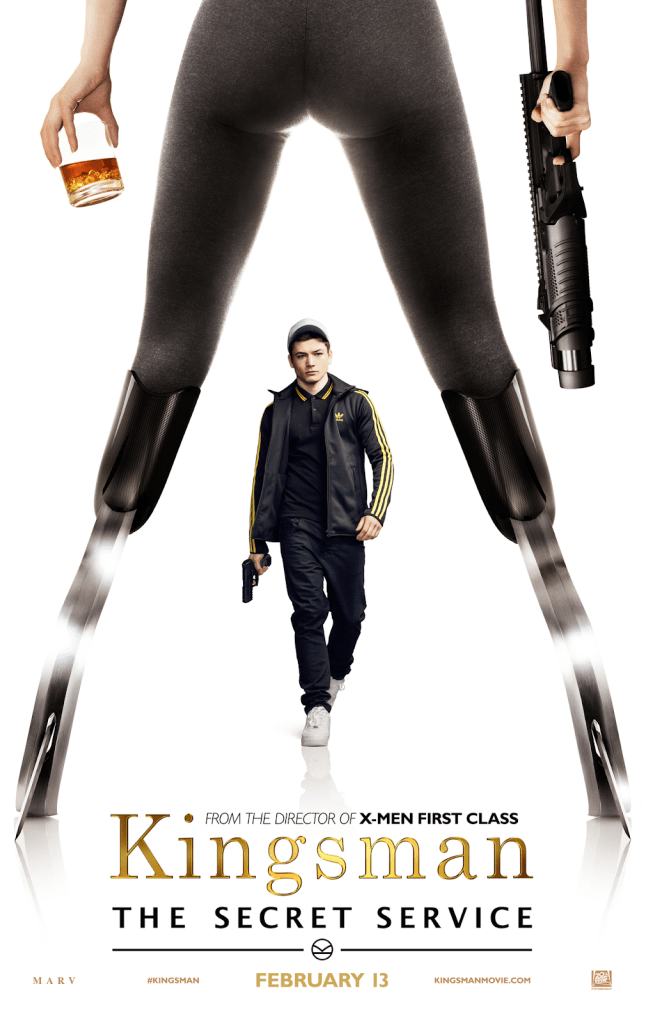

Image Credit: Kingsman: The Secret Service (2014), Matthew Vaughn

The exploitation of women’s bodies for public consumption is not a new phenomenon. A simple internet search reveals countless examples of hypersexualisation, including the recently identified “headless women” trope in Hollywood – where, for the sake of film promotion, female characters are reduced to sexualised body parts (as seen above). The pendulum of representation continues to swing, moving from earlier demands for greater media visibility to more recent efforts to challenge and reduce instances of exploitative exposure.

However, this issue has intensified in the digital age. Women are increasingly losing control over their own image, as it can now be detached, manipulated, and then displaced onto others through deepfakes.

Coined in 2017 by a Reddit user, the term “deepfake” refers to the process of digitally altering a person’s face, voice, or body so that they appear to be someone else. Initially, the process was used by the same Reddit user to superimpose the faces of celebrities onto the bodies of porn actors. In the years since, the technology has become significantly more sophisticated, with celebrity voices now able to be replicated and manipulated to say whatever the software user intends.

This development has highlighted the dangers of AI and become the subject of concern within political discourse, as doctored speeches and fabricated footage continue to spread misinformation rapidly across the globe. AI-generated or manipulated imagery has also begun to function as a political weapon, used by notable figures such as Donald Trump to ridicule opponents and shape public perception.

Yet beyond these political implications lies a more persistent and gendered threat. While the general public often engages with AI as a therapist, teacher, or advanced search engine, emerging reports reveal a growing misuse of the technology to digitally undress or sexualise women and children. These concerns have reached the UK Parliament, with particular criticism directed at Grok AI, developed by Elon Musk’s xAI.

“I and my fellow Committee members have been extremely alarmed by reports of the Grok chatbot being used to create illegal, exploitative and abusive images of women and even children. This is not only an online phenomenon; we know online abuse is a gateway to offline abuse and exploitation too.”

– Helen Hayes, Chair of the Education Committee

Despite xAI’s own use policy prohibiting the depiction of individuals “in a pornographic manner”, the problem is far from contained. Countless women continue to come forward with accounts of stolen identities and manipulated imagery circulating without their consent. These cases highlight a growing form of online sexual harassment – one that remains significantly under-interrogated in UK law.

Since Ofcom began investigating xAI for potential legal breaches, the company has updated its software so that it can no longer be used to digitally undress individuals, an issue that should never have existed in the first place. Despite this intervention, the damage has already been done. Women’s safety is too often treated as an afterthought rather than a fundamental consideration in the design and development of new technologies.

This raises an important question: why are women still not prioritised when it comes to threats against their own bodies? The problem is rooted in the software’s creation – more specifically, its creators. From early pioneers such as Geoffrey Hinton and John McCarthy to present-day figures like Elon Musk and Sam Altman, many of the most influential voices in AI share one obvious similarity: their gender.

Women make up less than 30% of the global AI workforce, meaning that most of the people shaping a technology that is rapidly integrating into daily life represent only half the population. As a consequence, AI risks creating a new “gender gap” that women must fight to close. For example, female-founded AI companies receive six times less capital than those founded by men. Women are also less likely to adopt AI in the workplace, yet they face what Forbes calls a “competence penalty” when they do use it.

For women, the consequences of AI use are deeply personal. A manipulated image can travel faster than any attempt to reclaim it, leaving reputational damage that is almost impossible to reverse. Unlike earlier forms of harassment, AI allows perpetrators to fabricate violations that never physically occur, yet inflict lasting psychological harm. The female body no longer needs to be present to be exploited.

If the past century has demonstrated anything, it is that women’s rights are rarely handed over willingly; they are demanded and continually renegotiated. The digital age is no exception. As artificial intelligence becomes further embedded in everyday life, the question is no longer whether safeguards should exist, but whether society will act quickly enough to build them.
